Judge Failla’s opinion in Dow Jones v. Perplexity doesn’t just keep the case alive—it frames RAG itself as the act of copying, and raises the specter of inducement liability under Grokster.

Although Judge Katherine Polk Failla’s August 21, 2025 opinion in Dow Jones & Co. v. Perplexity is technically a procedural ruling denying Perplexity’s motions to dismiss or transfer, Judge Failla offers an unusually candid window into how the Court may view the substance of the case. In particular, her treatment of retrieval-augmented generation (RAG) is striking: rather than describing it as Perplexity’s background plumbing, she identified it as the mechanism by which copyright infringement and trademark misattribution allegedly occur.

Remember, Perplexity’s CEO described the company to Forbes this way: “It’s almost like Wikipedia and ChatGPT had a kid.” I’m still looking for that attribution under the Wikipedia Creative Commons license.

As readers may recall, I’ve been very interested in RAG as an open door for infringement actions, so naturally this discussion caught my eye. So we’re all on the same page, retrieval-augmented generation (RAG) uses a “vector database” to expand an AI system’s knowledge beyond what is locked in its training data, for example by adding recent news sources.

When you prompt a RAG-enabled model, it first searches the database for context, then weaves that information into its generated answer. This architecture makes outputs more accurate, current, and domain-specific, but it also raises questions about copyright, data governance, and intentional use of third-party content, mostly because RAG may rely on information outside of the model’s training data. For example, if I queried “single bullet theory,” the AI might already have a copy of the Warren Commission report, but it would need to go out on the web for the latest declassified JFK materials, or news reports about those materials, to give a complete answer.

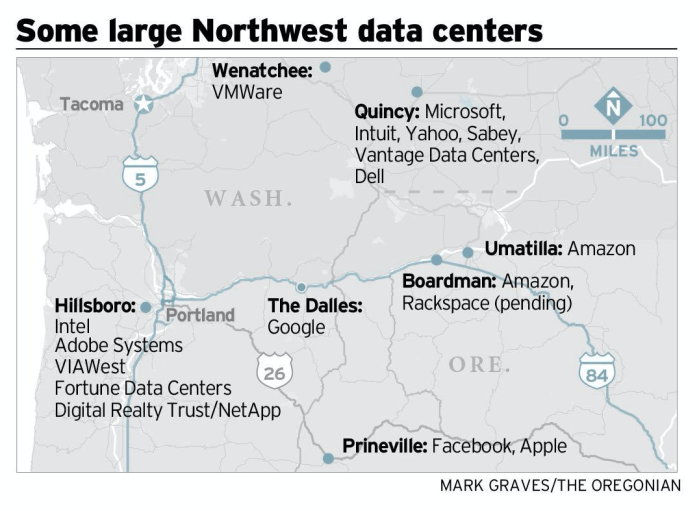

You can also think of Google Search or Bing as a kind of RAG index—and you can see how that would give search engines a big leg up in the AI race, even though none of their various safe harbors, Creative Commons licenses, Google Books or direct licenses were for this RAG purpose. So there’s that.

Judge Failla’s RAG Analysis

As Judge Failla explained, Perplexity’s system “relies on a retrieval-augmented generation (‘RAG’) database, comprised of ‘content from original sources,’ to provide answers to users,” with the indices “comprised of content that [Perplexity] want[s] to use as source material from which to generate the ‘answers’ to user prompts and questions.’” The model then “repackages the original, indexed content in written responses … to users,” with the RAG technology “tell[ing] the LLM exactly which original content to turn into its ‘answer.’” Or as another judge once said, “One who distributes a device with the object of promoting its use to infringe copyright, as shown by clear expression or other affirmative steps taken to foster infringement, going beyond mere distribution with knowledge of third-party action, is liable for the resulting acts of infringement by third parties using the device, regardless of the device’s lawful uses.” Or something like that.

On that basis, Judge Failla recognized Plaintiffs’ three theories of liability, which span both ends of the process: “first, by ‘copying a massive amount of Plaintiffs’ copyrighted works as inputs into its RAG index’; second, by providing consumers with outputs that ‘contain full or partial verbatim reproductions of Plaintiffs’ copyrighted articles’; and third, by ‘generat[ing] made-up text (hallucinations) … attribut[ed] … to Plaintiffs’ publications using Plaintiffs’ trademarks.’” In her jurisdictional analysis, Judge Failla stressed that these “inputs are significant because they cause Defendant’s website to produce answers that are reproductions or detailed summaries of Plaintiffs’ copyrighted works,” thus tying the alleged misconduct directly to Perplexity’s business activities in New York, although she was not making a substantive ruling in this instance.

What is RAG and Why It Matters

Retrieval-augmented generation is a method that pairs two steps: (1) retrieval of content from external databases or the open web, and (2) generation of a synthetic answer using a large language model. Instead of relying solely on the model’s pre-training, RAG systems point the model toward selected source material (news articles, scientific papers, legal databases) and instruct it to weave that content into an answer.
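To make the two steps concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the three-document `INDEX`, the toy word-count “embedding,” and the function names `retrieve` and `build_prompt` are hypothetical and say nothing about how Perplexity or any other company actually builds its system. Real systems use learned embeddings and a proper vector database, and step two ends with a call to a language model, which is omitted here.

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for a RAG index; the documents are invented for illustration.
INDEX = {
    "warren-report": "The Warren Commission report concluded that a single bullet struck both men.",
    "declassified-2025": "Newly declassified JFK files were released to the public this year.",
    "sports": "The home team won the championship game in overtime.",
}

def embed(text):
    """Toy 'embedding': word counts. Real systems use learned dense vectors."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, k=2):
    """Step 1: rank the indexed documents against the query and keep the top k."""
    q = embed(query)
    ranked = sorted(INDEX, key=lambda doc_id: cosine(q, embed(INDEX[doc_id])), reverse=True)
    return ranked[:k]

def build_prompt(query, doc_ids):
    """Step 2 (input side): paste the retrieved text into the prompt sent to the model."""
    context = "\n".join(f"[{doc_id}] {INDEX[doc_id]}" for doc_id in doc_ids)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {query}"

query = "declassified JFK files"
print(build_prompt(query, retrieve(query, k=1)))
```

Note what the sketch makes visible: the source text sits in the index in full, and it is copied word for word into the prompt before the model writes anything.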

From a user perspective, this can produce more accurate, up-to-date results. But from a legal perspective, the same pipeline can directly copy or closely paraphrase copyrighted material, often without attribution, and can even misattribute hallucinated text to legitimate sources. This dual role of RAG—retrieving copyrighted works as inputs and reproducing them as outputs—is exactly what made it central to Judge Failla’s opinion procedurally, but also may show where she is thinking substantively.

RAG in Frontier Labs

RAG is not a niche technique. It has become standard practice at nearly every frontier AI lab:

– OpenAI uses retrieval plug-ins and Bing integrations to ground ChatGPT answers.

– Anthropic deploys RAG pipelines in Claude for enterprise customers.

– Google DeepMind integrates RAG into Gemini and search-linked models.

– Meta builds retrieval into LLaMA applications and experimental assistants (Grok, for the record, is xAI’s assistant, not Meta’s).

– Microsoft has made Copilot fundamentally a RAG product, pairing Bing with GPT.

– Cohere, Mistral, and other independents market RAG as a service layer for enterprises.

Why Dow Jones Matters Beyond Perplexity

Perplexity just happened to draw the first reported opinion, as far as I know. The technical structure of its answer engine, which indexes copyrighted content into a RAG system and then repackages it for users, is not unique. It mirrors how the rest of the frontier labs are building their flagship products. What makes this case important is not that Perplexity is an outlier, but that it illustrates the legal vulnerability inherent in the RAG architecture itself.

Is RAG the Low-Hanging Fruit?

What makes this case so consequential is not just that Judge Failla treated RAG, at least for purposes of this ruling, as one alleged mechanism of infringement, but that RAG cases may be easier to prove than disputes over model training inputs. Training claims often run into evidentiary hurdles: plaintiffs must show that their works were included in massive opaque training corpora, that those works influenced model parameters, and that the resulting outputs are “substantially similar.” That chain of proof can be complex and indirect.

By contrast, RAG systems operate in the open. They index specific copyrighted articles, feed them directly into a generation process, and sometimes output verbatim or near-verbatim passages. Plaintiffs can point to before-and-after evidence: the copyrighted article itself, the RAG index that ingested it, and the system’s generated output reproducing it. That may make copyright infringement far more straightforward to prove than in a pure training case.
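A rough sketch of what that before-and-after comparison might look like in code: the function below measures how many of an output’s five-word sequences also appear word for word in the source article. The sample strings and the function names `shingles` and `verbatim_overlap` are hypothetical, and the five-word window is an arbitrary assumption. This is a crude screening measure, not the legal test for substantial similarity.

```python
import re

def shingles(text, n=5):
    """Return the set of n-word sequences in the text (lowercased, punctuation dropped)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}

def verbatim_overlap(article, output, n=5):
    """Fraction of the output's n-word sequences that also appear in the article."""
    out = shingles(output, n)
    if not out:
        return 0.0
    return len(out & shingles(article, n)) / len(out)

# Invented sample texts, for illustration only.
article = "The committee voted on Tuesday to approve the new budget after a long debate."
copied = "According to reports, the committee voted on Tuesday to approve the new budget."
paraphrased = "Lawmakers signed off on next year's spending plan following lengthy discussion."

print(verbatim_overlap(article, copied))       # high: most sequences are lifted
print(verbatim_overlap(article, paraphrased))  # zero: no shared five-word sequence
```

A high score flags lifted passages; a low score says nothing about close paraphrase or detailed summary, which would need a different kind of comparison.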

For that reason, Perplexity just happened to be first, but it will not be the last. Nearly every frontier lab, including OpenAI, Anthropic, Google, Meta, and Microsoft, is relying on RAG as the architecture of choice to ground its models. If RAG is the legal weak point, this opinion could mark the opening salvo in a much broader wave of litigation aimed at AI platforms, with courts treating RAG not as a technical curiosity but as a direct, provable conduit for infringement.

And lurking in the background is a bigger question: is Grokster going to be Judge Failla’s roundhouse kick? That irony is delicious. By highlighting how Perplexity (like the others) deliberately designed its system to ingest and repackage copyrighted works, the opinion sets the stage for a finding of intentionality that could make RAG the twenty-first-century version of inducement liability.