A coalition of NGOs, media associations, and publishers in Germany has filed a formal Digital Services Act (DSA) complaint against Google’s AI Overviews, arguing that the feature diverts traffic and revenue from independent media, increases misinformation risks through opaque systems, and threatens media plurality. Under the DSA, violations can carry fines of up to 6% of global annual turnover, a potentially multibillion-dollar exposure.

The complaint claims that AI Overviews answer users’ queries inside Google, short-circuiting click-throughs to the original sources and starving publishers of ad and subscription revenues. Because users can’t see how answers are generated or verified, the coalition warns of heightened misinformation risk and erosion of democratic discourse.

Why the Digital Services Act Matters

As I understand the DSA, news publishers have four options: (1) lodge a complaint with their national Digital Services Coordinator alleging that a platform has breached the DSA, which triggers regulatory scrutiny; (2) use the platform dispute tools, first the internal complaint-handling system and then certified out-of-court dispute settlement for moderation and search-display disputes, which often bring faster practical relief; (3) sue for damages in national courts for losses caused by a provider’s DSA infringement (Art. 54); or (4) act collectively by mandating a qualified entity or by using the EU Representative Actions Directive to seek injunctions or redress (roughly comparable to class actions in the U.S., but more limited in scope).

Under the DSA, Very Large Online Platforms (VLOPs) and Very Large Online Search Engines (VLOSEs) are services with at least 45 million average monthly active users in the EU (approximately 10% of the population). Once formally designated by the European Commission, they face stricter obligations than smaller platforms: conducting annual systemic risk assessments, implementing mitigation measures, submitting to independent audits, providing data access to researchers, and ensuring transparency in recommender systems and advertising. Enforcement against these services is centralized at the Commission, with penalties of up to 6% of global annual turnover. This matters because companies like Google, Meta, and TikTok can be required to alter core design choices that directly affect media visibility and revenue. In parallel, the European Commission and the Digital Services Coordinators (DSCs) retain powerful public-enforcement tools against these very large services.

Because Google Search is a designated Very Large Online Search Engine, Google faces strict duties to mitigate systemic risks, provide algorithmic transparency, and avoid conduct that undermines media pluralism. The complaint contends that AI Overviews violate these requirements by replacing outbound links with Google’s own synthesized answers.

The U.S. Angle: Penske Lawsuit

A Major Publisher Has Sued Google in Federal Court Over AI Overviews

On Sept. 14, 2025, Penske Media Corporation (PMC), publisher of Rolling Stone, Billboard, and Variety, sued Google in D.C. federal court, alleging that AI Overviews repurpose its journalism, depress clicks, and damage revenue. It is the first lawsuit by a major U.S. publisher aimed squarely at AI Overviews. The complaint includes a training-use claim: that Google enriched itself by using PMC’s works to train and ground the models powering Gemini and AI Overviews, for which Penske seeks restitution and disgorgement. Penske also argues that Google abuses its search monopoly to coerce publishers: indexing is effectively tied to letting Google (a) republish or summarize their material in AI Overviews, Featured Snippets, and AI Mode, and (b) use their works to train Google’s LLMs. The result, Penske says, is lower click-through and revenue for publishers while Google expands its monopoly into online publishing.

Trade Groups Urged FTC/DOJ Action

The News/Media Alliance had previously asked the FTC and DOJ to investigate AI Overviews for diverting traffic and ‘misappropriating’ publishers’ investments, calling for enforcement under FTC Act §5 and Sherman Act §2.

Data Showing Traffic Harm

Industry analyses indicate material referral declines tied to AI Overviews. Digital Content Next reports that Google Search referrals fell 1%–25% for most member publishers over recent weeks; Digiday pegs the impact at as much as 25%. The trend feeds a broader ‘Google Zero’ concern: zero-click results displacing publisher visits.

Why the European and U.S. Paths Differ

The DSA gives the EU a procedural path to assess systemic risk and platform design choices like AI Overviews, and to impose platform-wide remedies and fines. In the U.S., the fight currently runs through private litigation (the Penske suit) and competition and consumer-protection advocacy at the FTC and DOJ, where the enforcement tools differ and take longer to mobilize.

RAG vs. Training Data Issues

AI Overviews are best understood as a Retrieval-Augmented Generation (RAG) issue. Readers will recall that RAG is probably the most direct example of verbatim copying in AI outputs. The harms arise because Google as middleman retrieves live publisher content and synthesizes it into an answer inside the Search Engine Results Page (SERP), reducing traffic to the sources. This is distinct from the training-data lawsuits (Kadrey, Bartz) that allege unlawful ingestion of works during model pretraining.
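To make the retrieval-versus-training distinction concrete, here is a toy sketch of a retrieve-then-synthesize pipeline. It is an illustration only, not a description of how Google’s systems actually work: the `Page` type, the `INDEX` contents, the publishers, the URLs, and the word-overlap scoring are all hypothetical stand-ins. The point it shows is that in RAG the publisher’s text is fetched at query time and folded into the answer, so the reader gets the substance without visiting the source.

```python
from dataclasses import dataclass


@dataclass
class Page:
    publisher: str
    url: str
    text: str


# Hypothetical "live" index of publisher pages (illustrative data only).
INDEX = [
    Page("Example Music Weekly", "https://example.com/tour",
         "The band announced a 2026 world tour starting in Berlin."),
    Page("Example Film Daily", "https://example.com/box-office",
         "The sequel opened to record box office numbers this weekend."),
]


def tokenize(s: str) -> set[str]:
    """Lowercase the words and strip basic punctuation."""
    return {w.strip(".,?!").lower() for w in s.split()}


def retrieve(query: str, index: list[Page], k: int = 1) -> list[Page]:
    """Retrieval step: rank pages by word overlap with the query."""
    q = tokenize(query)
    ranked = sorted(index, key=lambda p: len(q & tokenize(p.text)), reverse=True)
    return ranked[:k]


def synthesize(sources: list[Page]) -> str:
    """Generation step (stand-in): reuse the retrieved text and credit the source.

    A real system would pass the retrieved text to a language model; this
    stand-in simply copies it, which is the verbatim-reuse concern in its
    plainest form.
    """
    body = " ".join(p.text for p in sources)
    credits = ", ".join(f"{p.publisher} <{p.url}>" for p in sources)
    return f"{body} (Sources: {credits})"


def answer(query: str) -> str:
    return synthesize(retrieve(query, INDEX))


if __name__ == "__main__":
    print(answer("When does the band tour start?"))
```

Nothing in this sketch involves model training. The publisher’s words reach the user through the retrieval step alone, which is why the harm theory here differs from the pretraining cases.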

Kadrey: Indirect Market Harm

A RAG case like Penske’s could also be characterized as indirect market harm. Judge Chhabria’s ruling in Kadrey highlights that, under U.S. fair use law, market harm isn’t limited to direct substitution. The fourth fair use factor also covers foreclosure of licensing and derivative markets. For AI and search, that means reduced referrals depress ad and subscription revenue, while widespread zero-click synthesis may foreclose an emerging licensing market for summaries and excerpts. Evidence of harm includes before-and-after referral data, revenue deltas, and qualitative harms like brand erasure and loss of attribution. Remedies could include more prominent linking, revenue-sharing, compliance with robots.txt directives and other opt-outs, and provenance disclosures.

I like them RAG cases.

The Essential Issue Is Similar in the EU and the U.S.

Whether in Brussels or Washington, the core dispute is very similar: Who captures the value of journalism in an AI-mediated search world? Germany’s DSA complaint and Penske’s U.S. lawsuit frame twin fronts of a larger conflict—one about control of distribution, payment for content, and the future of a pluralistic press. Not to mention the usual free-riding and competition issues swirling around Google as it extracts rents by inserting itself into places it’s not wanted.

How an AI Moratorium Would Preclude Penske’s Lawsuit

Many “AI moratorium” proposals function as broad safe harbors with preemption. A moratorium to benefit AI and pick national champions was the subject of an IP Subcommittee hearing on September 18. If Congress enacted a moratorium that (1) expressly immunizes core AI practices (training, grounding, and SERP-level summaries), (2) preempts overlapping state claims, and (3) channels disputes into agency processes with exclusive public enforcement, it would effectively close the courthouse door to private suits like Penske’s and make the U.S. more like Europe, but without the enforcement apparatus. Here’s how:

Express immunity for covered conduct. If the statute declares that using publicly available content for training and for retrieval-augmented summaries in search is lawful during the moratorium, Penske’s core theory (RAG substitution plus training use) loses its predicate.

No private right of action / exclusive public enforcement. Limiting enforcement to the FTC/DOJ (or a designated tech regulator) would bar private plaintiffs from seeking damages or injunctions over covered AI conduct.

Antitrust carve-out or agency preclearance. Congress could provide that covered AI practices (AI Overviews, featured snippets powered by generative models, training/grounding on public web content) cannot form the basis for Sherman/Clayton liability during the moratorium, or must first be reviewed by the agency—undercutting Penske’s §1/§2 counts.

Primary-jurisdiction plus statutory stay. Requiring first resort to the agency with a mandatory stay of court actions would pause (or dismiss) Penske until the regulator acts.

Preemption of state-law theories. A preemption clause would sweep in state unjust-enrichment and consumer-protection claims that parallel the covered AI practices.

Limits on injunctive relief. Barring courts from enjoining covered AI features (e.g., SERP-level summaries) and reserving design changes to the agency would eliminate the centerpiece remedy Penske seeks.

Potential retroactive shield. If drafted to apply to past conduct, a moratorium could moot pending suits by deeming prior training/RAG uses compliant for the moratorium period.

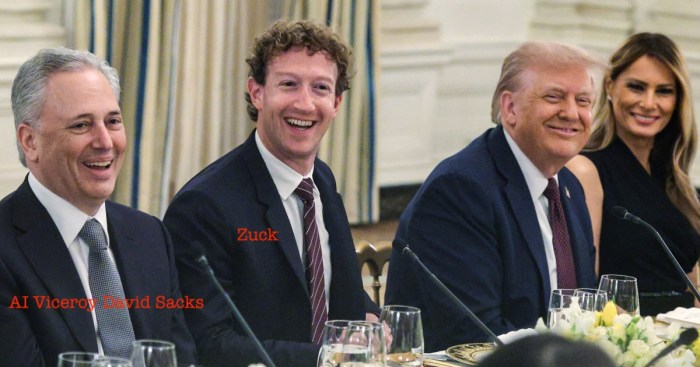

A moratorium with safe harbors, preemption, and agency-first review would stay, gut, or bar Penske’s antitrust and unjust-enrichment claims, reframing the dispute as a regulatory matter rather than a private lawsuit. Want to bet that White House AI Viceroy David Sacks will be sitting in judgment?