The current narrative says the “AI subsidy era” is ending. Prices are rising. Rate limits are tightening. Ads are creeping in. Enterprise tiers are replacing all-you-can-eat plans. In short: users will finally start paying what AI actually costs.

Hayden Field, writing in The Verge, tells us:

Earlier this month, millions of OpenClaw users woke up to a sweeping mandate: The viral AI agent tool, which this year took the worldwide tech industry by storm, had been severely restricted by Anthropic.

Anthropic, like other leading AI labs, was under immense pressure to lessen the strain on its systems and start turning a profit. So if the users wanted its Claude AI to power their popular agents, they’d have to start paying handsomely for the privilege.

“Our subscriptions weren’t built for the usage patterns of these third-party tools,” wrote Boris Cherny, head of Claude Code, on X. “We want to be intentional in managing our growth to continue to serve our customers sustainably long-term. This change is a step toward that.”

The announcement was a sign of the times. Investors have poured hundreds of billions of dollars into companies like OpenAI and Anthropic to help them scale and build out their compute. Now, they’re expecting returns. After years of offering cheap or totally free access to advanced AI systems, the bill is starting to come due — and downstream, users are beginning to feel the pinch.

That’s true, but it leaves out a lot.

Yes, the consumer subsidy—venture-backed underpricing of inference—may be winding down. But the broader subsidy system that made AI possible isn’t going away. It’s expanding. Just ask President Trump.

To understand why, you have to go back to the last great digital disruption.

From P2P to Streaming to AI

Start with Napster.

P2P didn’t just enable infringement. It rewired expectations. It taught users that all music should be available, instantly, for free. Why? Because there was gold in them long tails. Forget about supply and demand: we had infinite supply, so demand would take care of itself.

Every artist, songwriter, label, and publisher in the history of recorded music went uncompensated for this shift. They were its involuntary financiers. Their catalogs created the demand, the network effects, and the user adoption that built the early internet music economy.

Streaming—think Spotify—didn’t reverse that logic. It formalized it. (Remember, streaming saved us from piracy and we should all be so grateful.) It actually transferred that involuntary financing from the P2P balance sheet to Spotify’s, and took it public.

Streaming platforms accepted a new baseline: the entire world’s repertoire must be available at all times, regardless of demand. That is a costly and structurally inefficient mandate, but it became the price of competing in a market shaped by P2P expectations. Licensing systems like the Mechanical Licensing Collective (MLC) were built to support that scale, but the underlying premise remained: total availability first, compensation second.

AI changes the game again.

AI Doesn’t Just Distribute Works. It Consumes Them.

P2P distributed music. Streaming licensed it. AI models ingest it.

That’s the critical difference.

Generative AI systems are trained on massive corpora that include copyrighted works, performances, and what we might call personhood signals—voice, style, tone, phrasing, and creative identity. These inputs are not just indexed or streamed. They are transmogrified (see what I did there) into model weights that can generate new outputs that compete with, mimic, or substitute for the originals.

So the role of the artist evolves:

• In P2P: unpaid distributor subsidy

• In streaming: underpaid inventory supplier

• In AI: uncompensated production input

That is not a marginal shift. It is a structural one.

The Real Subsidy Stack

When people say the “AI subsidy era is over,” they are usually talking about one thing: cheap access to compute.

But AI has always depended on a multi-layered subsidy stack:

Creators – supply training data, cultural value, and identity signals without compensation or consent

Users – supply prompts, feedback, and behavioral data that improve the models

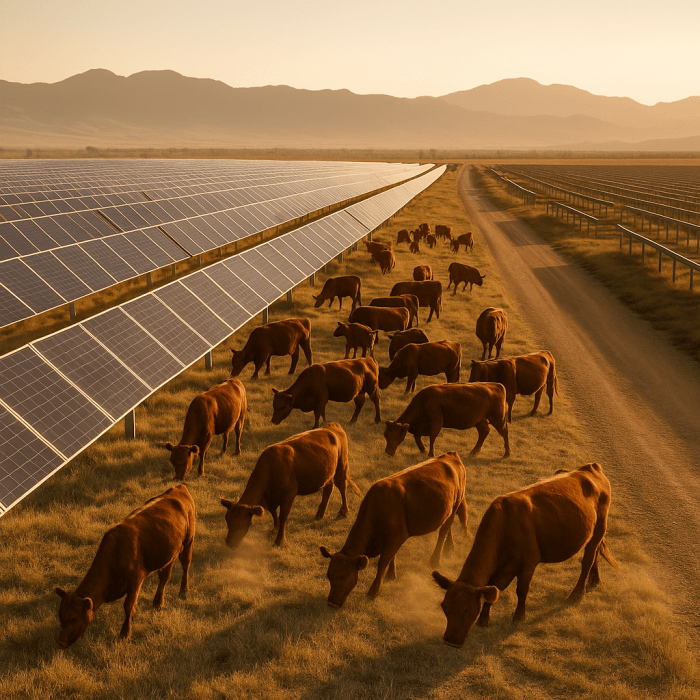

Communities – absorb land use, water consumption, and environmental costs

Ratepayers – fund grid upgrades, transmission, and reliability for data center demand

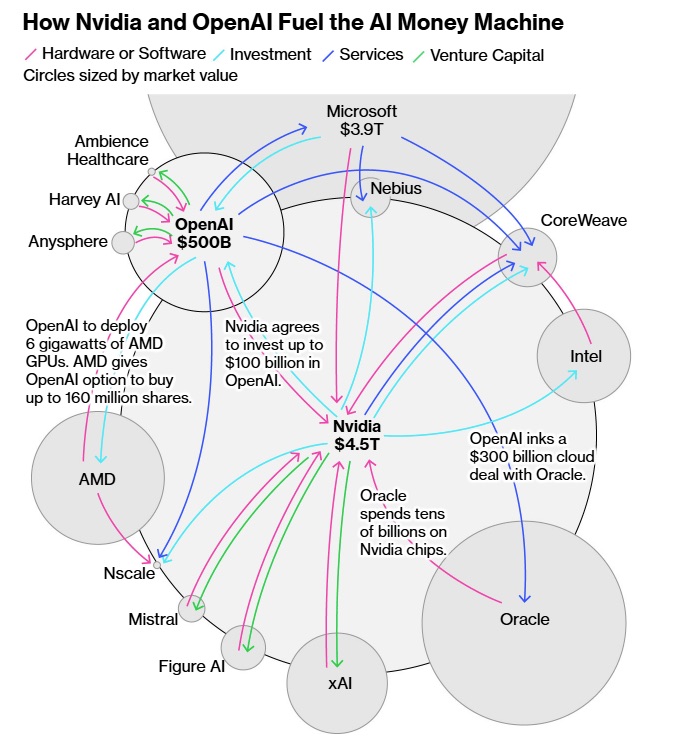

Venture capital – underwrites early losses to drive adoption and scale

The shift we are seeing now is not the end of subsidies. It’s a reallocation. Or as a cynic might say, it’s rearranging the deck chairs to hide the lifeboats.

Users may start paying more. But creators still aren’t being paid for training. Communities are still being asked to host infrastructure. And the physical footprint of AI is accelerating. Just ask President Trump.

The World Turned Upside Down

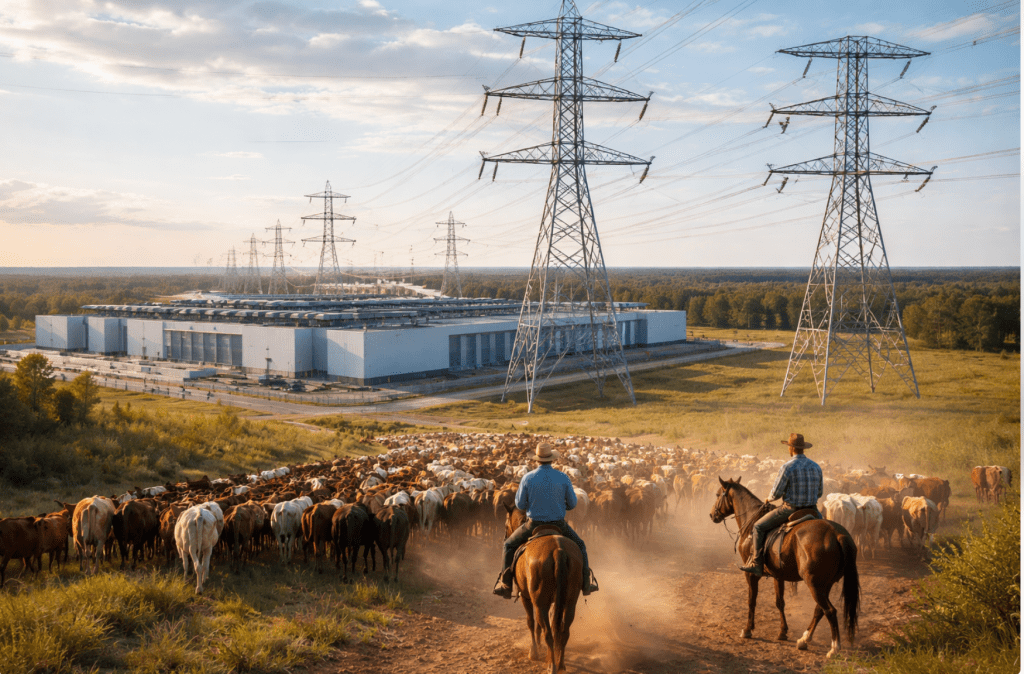

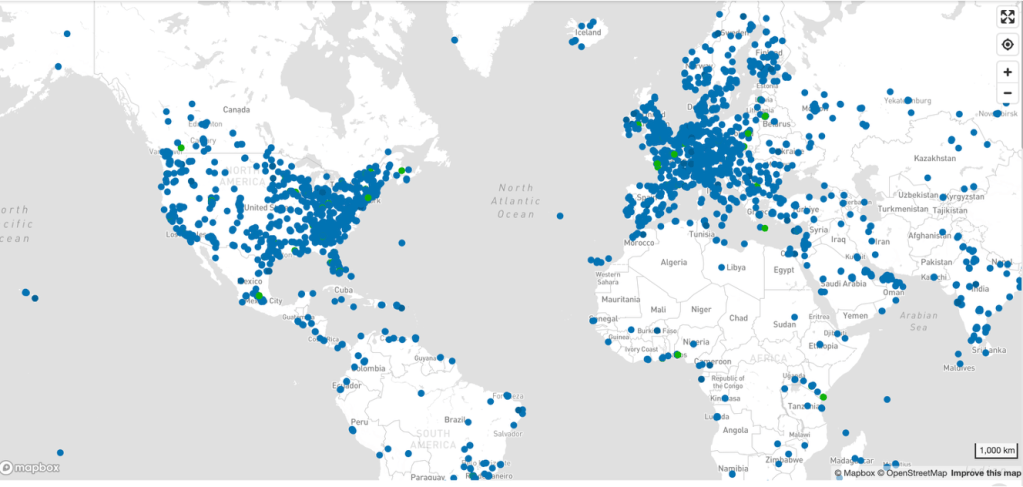

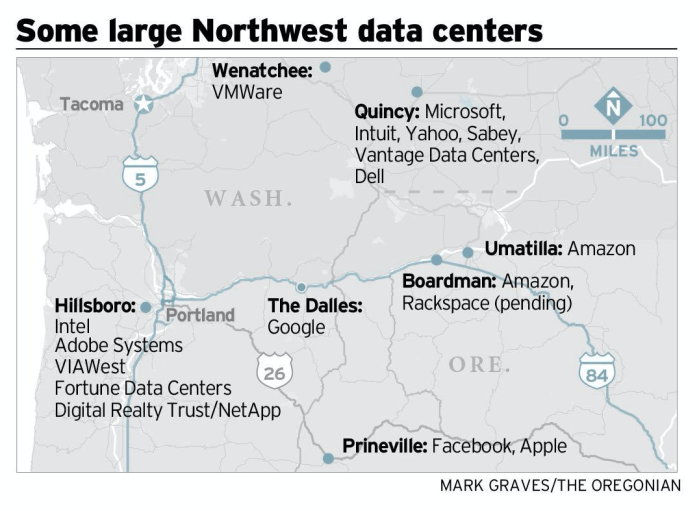

What makes this moment different is the scale of the buildout.

We are not just talking about apps anymore. We are talking about an industrial transformation:

• New data centers the size of small cities

• High-voltage transmission lines

• Water-intensive cooling systems

• Semiconductor supply chains

• And even discussions of new nuclear capacity to support compute demand

This is infrastructure on the scale of a national project, or really a national mobilization. But it is being built on top of a premise that has not been resolved: the uncompensated use of human creative work as training input.

That is the inversion: We are building power plants for systems that depend on not paying the people whose work makes those systems possible.

A Better Frame

The cleanest way to understand this is as a continuum:

P2P turned infringement into consumer expectation.

Streaming turned that expectation into platform infrastructure.

AI turns uncompensated authorship into industrial feedstock.

Or more bluntly:

The AI free ride is not ending. It is being re-invoiced. Users may now see higher prices. But the deeper subsidies—creative, environmental, and civic—remain off the books.

What Comes Next

If the industry is serious about “pricing AI correctly,” it cannot stop at compute.

It has to address:

• Compensation frameworks for training data

• Attribution and provenance standards

• Licensing models for style and voice

• Infrastructure cost allocation (who pays for the grid?)

• Governance of large-scale compute deployment

Otherwise, we are not exiting the subsidy era. We are doing what Big Tech lives for.

We are scaling it.

And this time, instead of a few server racks in a dorm room, we are building a global energy system around it.