For years, the political conversation around AI data centers followed a familiar script that was straight out of the Chamber of Commerce. Governors competed to announce the next hyperscale campus. Counties rezoned farmland and conservation land into heavy industrial corridors. Legislatures approved enormous tax abatements with little debate. Utilities promised “economic development.” And local officials were told that if they moved too slowly, some other state would take the project instead. Kind of like because China.

Residents of Crowell, Texas, are being forced to live with constant artificial daylight from the Google AI data center being built right next to them. They report severe 24/7 light pollution that turns night into day (photo below).

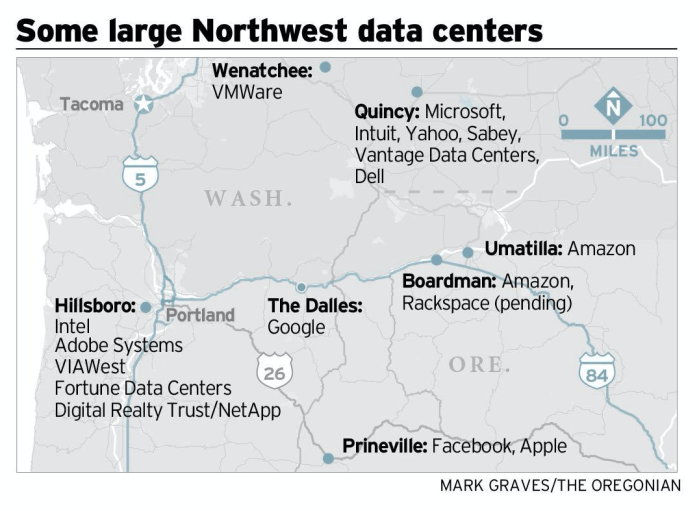

Why? Because even 10 years ago it was self-evident that there was no political opposition to Big Tech, and nobody looked too hard at the reality of data centers in places where we had observable data, like Oregon. If they had, they would have known one thing was absolutely true: data centers were not factories, and they produced higher electric bills and fewer jobs. At least once the sugar high of construction had passed.

And speaking of jobs, in a November 2025 difference-in-differences study, economist Michael J. Hicks examined every data center opened in Texas and found zero statistically significant net employment effect: job gains in the data center sector were fully offset by losses in other industries. The average treatment effect was roughly 46 workers per facility, which the author concludes is “correctly interpreted as zero” and which is less than one-tenth the jobs generated by a single Walmart Supercenter.

Good Jobs First has found that the three states that have measured their data center return on investment lose 52 to 91 cents on the dollar, and in Virginia alone, the sales and use tax exemption for data centers consumed 81.3% of the state’s entire economic development incentives budget in FY 2024.

But it’s not just light pollution. Even though it was patently obvious that the massive data centers that were getting built in Louisiana, Georgia, Utah and Nevada were vastly larger than the already operating data centers in Oregon and were guaranteed to chew up the environment way more, nobody bothered to put 2 and 2 together and check how deep the foundations were compared to local aquifers.

That script is now breaking down. I’m shocked, said no one.

As we told the UK Intellectual Property Office:

We call the IPO’s attention to the real-world example of the U.S. State of Oregon, a state that is roughly the geographical size of the UK. Google built the first Oregon data centre in The Dalles, Oregon in 2006. Oregon now has 125 of the very data centres that Big Tech will necessarily need to build in the UK to implement AI. In other words, Oregon was sold much the same story that Big Tech is selling you today.

The rapid growth of Oregon data centres driven by the same tech giants like Amazon, Apple, Google, Oracle, and Meta, has significantly increased Oregon’s demand for electricity. This surge in demand has led to higher power costs, which are often passed on to local rate payers while data centre owners receive tax benefits. This increase in price foreshadows the market effect of crowding out local rate payers in the rush for electricity to run AI—demand will only increase and increase substantially as we enter what the International Energy Agency has called “the age of electricity”.

Portland General Electric, a local power operator, has faced increasing criticism for raising rates to accommodate the encroaching electrical power needs of these data centers. Local residents argue that they unfairly bear the increased electrical costs while data centers benefit from tax incentives and other advantages granted by government.

This is particularly galling in that the hydroelectric power in Oregon is largely produced by massive taxpayer-funded hydroelectric and other power projects built long ago. The relatively recent 125 Oregon data centres received significant tax incentives during their construction to be offset by a promise of future jobs. While there were new temporary jobs created during the construction phase of the data centres, there are relatively few permanent jobs required to operate them long term as one would expect from digitized assets owned by AI platforms.

Of course, the UK has approximately 16 times the population of Oregon. Given this disparity, it seems plausible that whatever problems that Oregon has with the concentration of data centers, the UK will have those same problems many times over due to the concentration of populations.

This message is getting through to elected officials around the world because citizens are freaking out.

Quietly at first, and then all at once, states and local governments across the country began pushing back. Some are freezing approvals entirely. Others are reconsidering billions in tax incentives. Some are demanding that data centers pay the real cost of the transmission infrastructure they require instead of socializing those costs onto ordinary ratepayers and anyone else who drinks water and breathes air.

This is no longer a niche zoning issue in Northern Virginia or some European bureaucratic nonsense. It is becoming a national political movement that has some real populist overtones worthy of a Brexiteer. According to the National Conference of State Legislatures (NCSL), at least 11 states have introduced statewide moratorium or ban legislation targeting data centers. Meanwhile, Good Jobs First reports more than 60 local moratorium efforts nationwide, and finds that at least 14 states and scores of localities are failing to disclose the tax abatement revenue they are losing to data centers, even though they have been required to do so under Generally Accepted Accounting Principles (GAAP) since FY 2017.

The reasons vary by region, as you’d expect, but the themes are becoming remarkably consistent, and many of them are ones the Artist Rights Institute raised in our comments on the US AI Action Plan and the UK IPO AI consultation:

• massive electricity demand;

• water consumption;

• transmission line expansion;

• opaque tax subsidies;

• industrialization of rural communities;

• secrecy surrounding the ultimate hyperscale users;

• and growing fear that ordinary households will subsidize AI infrastructure through higher utility bills.

What is striking is not merely the existence of resistance. It is the geographic breadth of it.

In Texas, lawmakers enacted new large-load interconnection rules while Hill County adopted a temporary construction pause and Agriculture Commissioner Sid Miller publicly called for broader scrutiny of data centers. In Virginia, long considered the unquestioned capital of the data center industry, legislators are openly debating whether to scale back tax exemptions that helped fuel “Data Center Alley.” In Illinois, Governor Pritzker proposed suspending new tax incentives entirely for two years.

Even places that aggressively courted data centers are beginning to hesitate.

In Reno, Nevada, officials adopted a pause on approving new data centers while they reevaluate land-use and infrastructure impacts. Duh. Ya think?

The Reno–Tahoe industrial corridor became a symbol of how quickly hyperscale development can transform an entire region once incentives and transmission infrastructure align. Nevada approved hundreds of millions in projected abatements over the last decade. Now local officials are asking whether the public actually understood the scale of what was being built. If you build it they will come, and they will take a huge dump in your backyard.

The same questions are emerging everywhere else: Who is the real end user? Who pays for the substations and 765-kV transmission lines? What happens if AI demand projections collapse halfway through construction? And why are local taxpayers subsidizing facilities that often employ surprisingly few permanent workers once operational? Well…not really surprisingly, but surprisingly if you believed the Chamber of Commerce hoorah.

The politics are changing because the physical footprint of AI is no longer abstract. The cloud is becoming visible. And you cannot bribe your way out of that one.

Residents now see the cooling towers. They see the transmission corridors. They hear the backup generators. In some communities they are learning about low-frequency industrial noise and infrasound issues that do not show up on ordinary decibel measurements. They see conservation land rezoned into industrial districts almost overnight. They see shell companies quietly assembling land while refusing to identify the ultimate hyperscale beneficiary.

Most importantly, they are beginning to understand that these projects are not temporary construction booms. They are permanent industrialization decisions. A 765-kV transmission corridor is not a pop-up startup. Neither is a hyperscale campus consuming as much electricity as a mid-sized city. And once the infrastructure is built, communities live with the consequences for generations.

The result is a new kind of political coalition that cuts across ideological lines. Environmental advocates, fiscal conservatives, rural landowners, grid-reliability hawks, and anti-subsidy activists are increasingly finding themselves on the same side of the debate. That does not mean the data center industry is stopping. Far from it. Billions are still flowing into AI infrastructure. Utilities continue planning enormous generation and transmission expansions. States remain eager for construction spending and property tax growth.

But the era of automatic approval is ending. The central political question is no longer whether AI infrastructure will expand. It is who bears the cost.

And there is another revealing development occurring at the federal level. What does it tell you that President Trump reportedly pulled back an executive-order framework that would have required certain AI labs to obtain government cybersecurity approval or clearance before launching advanced systems?

Whatever one thinks of the policy itself, the episode suggests intense behind-the-scenes conflict inside the administration and the AI industry over whether any meaningful federal guardrails should exist at all. Sources around Washington describe the push as a last-ditch effort by what critics derisively call the “Zombie AI Viceroy” David Sacks, the lobbyist who seemingly cannot be fired because the entire AI infrastructure race has become too politically and financially entangled. We will see whether federal safeguards reappear in another form. But at this moment, the practical reality is striking: the only governments actively imposing meaningful friction on AI infrastructure expansion are states, counties, and local municipalities.

State and Local Data Center Restriction / Tax Rollback Tracker (May 2026)

Alabama — Considering rules requiring data centers to bear infrastructure/grid costs

Arizona — Chandler pause; grid-cost proposals under consideration

California — Bills addressing ratepayer and environmental protections

Colorado — Denver moratorium; Larimer County pause; Logan County restrictions

Connecticut — Morris moratorium; Groton zoning restrictions

Florida — Enacted protections for local zoning authority and ratepayer safeguards

Georgia — HB 1059 introduced forbidding local permitting until December 2028; local pauses; estimated $2.5 billion per year in tax abatement revenue losses (highest in nation)

Illinois — Governor called for two-year pause of data center tax incentives

Indiana — Considering restructuring of tax incentive revenue sharing; fails to disclose data center costs despite ranking fifth-best in subsidy transparency nationally

Louisiana — New Orleans temporary moratorium

Maine — LD 307 moratorium on data centers over 20 MW (vetoed by Governor); local moratoria

Maryland — Proposed statewide approval restrictions (SB 931 / HB 1369)

Massachusetts — Lowell moratorium

Michigan — State moratorium proposals; Ypsilanti pause

Minnesota — Removed electricity sales tax exemption; created new annual energy-use fee; Minneapolis moratorium discussions

Nevada — Reno approval pause; growing tax-abatement controversy; Controller issues exemplary annual report of local revenue losses from state-awarded abatements

New Hampshire — HB 1265 one-year moratorium on data center construction (failed)

New Jersey — Millville ban/restrictions; prevailing wage requirement for data center construction (enacted February 2026)

New York — AB 10141 / SB 9144 statewide moratorium and Public Utility Commission rulemaking (introduced); Athens/Dryden/Mount Morris local restrictions

North Carolina — Chatham County moratorium; additional local reviews

North Dakota — Oliver County temporary moratorium activity

Ohio — Numerous local pauses; growing subsidy backlash

Oklahoma — SB 1488 moratorium until November 2029 (introduced); incentive rollback proposals

Oregon — Affordability/reliability proposals tied to large-load users

Pennsylvania — Moratorium discussions underway (HB 1370 introduced per NCSL)

South Carolina — SB 567 proposal to restrict approvals pending oversight framework (introduced)

South Dakota — SB 232 one-year statewide moratorium (introduced); local-control protections enacted

Texas — Large-load legislation; local moratoria and review fights; estimated $1 billion or more per year in tax abatement revenue losses; Hicks (2025) causal study found zero net job growth from data centers statewide

Vermont — S 205 proposed moratorium through 2030 with impact study requirement (introduced)

Virginia — HB 1515 prohibiting new approvals until interconnection requests fulfilled or July 2028 (continued); major debate over scaling back tax exemptions; estimated $1.94 billion per year in revenue losses; data center exemptions consumed 81.3% of state’s entire incentive budget in FY 2024

Washington — Restrictions tied to emissions-credit eligibility

Wisconsin — Moratorium proposal (status unverified; not listed in NCSL tracker)

The important point is not whether every proposal will pass. Some will and some won’t. The important point is that resistance is no longer isolated. The backlash has become national. And resistance is not futile.